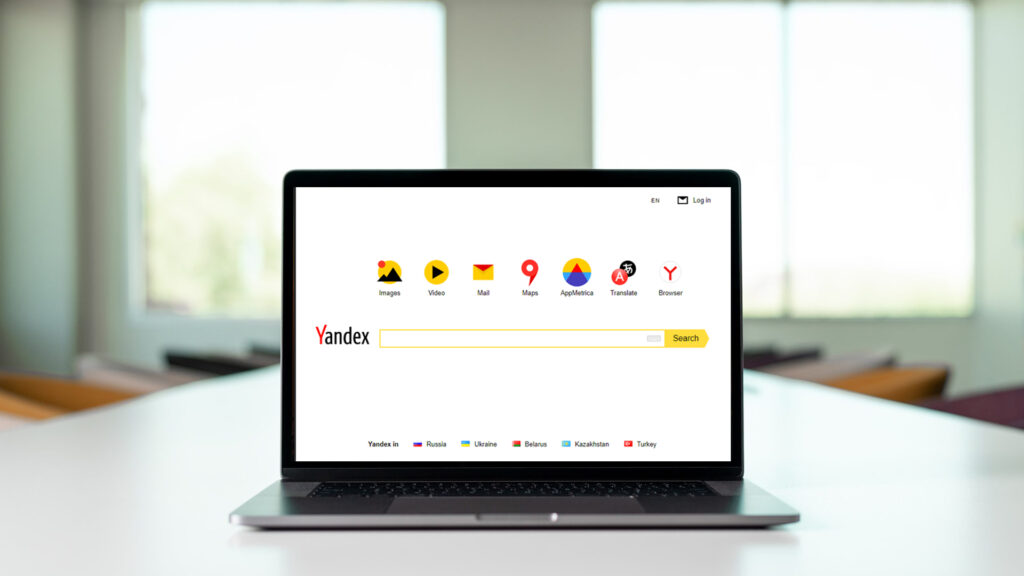

Yandex is the fourth-largest search engine in the world, and its major market share is in Russia. On January 27th, 2023, it became the victim of a data leak that ranks among the largest that a tech company has encountered in recent times. The worst part is that this is the second Yandex leak in close to a decade.

A former Yandex employee attempted to sell the Yandex search engine code on the black market for $30,000 in 2015. The initial 2023 leak revealed 1,922 ranking factors, of which 64 percent were listed as deprecated or unused. Although that leak covered only the file termed “kernel,” more files were later found that together contain about 17,800 ranking components. Most of this is applicable when it comes to SEO for Yandex.

Yandex, like Google, has always been transparent about algorithm updates and changes, and in recent years it has increasingly adopted machine learning. A few of the notable updates from the last two to three years include:

- Y2 update (late 2022)

- Y1 update (introducing YATI)

- Vega (which doubled the size of the index)

- Adoption of IndexNow

- A new rollout and an expected update of the PF filter

At a personal level, this Yandex leak feels like a second Christmas. Since January 2020, a hobby news website has covered Yandex SEO and search news in Russia with 600+ articles, and this leak is likely to prove that site’s peak event. It is the right time to test how well Yandex’s public statements match the secrets in its codebase.

Though Yandex is primarily known for its presence in Russia, the search engine also has a presence in Georgia, Turkey, and Kazakhstan. There is a general feeling that the data leak was politically motivated. It consists of a number of code fragments from Arcadia, Yandex’s monolithic repository. Close to 44 GB of data was leaked, containing information related to Yandex products that include apps, Mail, Search, Disk, and Cloud.

What are the views of Yandex?

As of January 31st, 2023, Yandex has publicly stated the following:

- The contents of the leaked code database correspond to an outdated version of the repository, which differs from the current version used by its services.

- The published code fragments also contain algorithms that were used only within Yandex to verify the correct operation of its services.

How much of the code can be actively used is a different question altogether.

Yandex has revealed that during its audit and investigation, it found a number of errors that were in violation of its own principles. So, it is likely that the portions of the leaked code that are currently in use may change in the future.

Factor classification

Yandex classifies its ranking factors into three major categories. This classification is specified in Yandex’s public SEO documentation, and it aids in understanding the ranking factor leak.

- Static factors: factors that are directly related to the website. Examples are internal links, inbound links, headers, etc.

- Dynamic factors: factors that are related to both the website and the search query. Examples are keyword relevance and keyword inclusions.

- User search-related factors: factors that are related to the user’s query (the exact location of the user, intent modifiers, and the query language).
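One way to keep this classification straight when annotating factors from the leak is to tag each one with its category. The following is a minimal sketch only: the factor names are hypothetical labels derived from the examples above, not identifiers from the Yandex codebase.

```python
from dataclasses import dataclass
from enum import Enum


class FactorType(Enum):
    STATIC = "static"    # tied to the website itself
    DYNAMIC = "dynamic"  # tied to the website and the search query
    USER = "user"        # tied to the user's search


@dataclass(frozen=True)
class RankingFactor:
    name: str  # hypothetical label, not a name from the leak
    factor_type: FactorType


# Examples taken from the three categories described above.
EXAMPLE_FACTORS = [
    RankingFactor("inbound_links", FactorType.STATIC),
    RankingFactor("headers", FactorType.STATIC),
    RankingFactor("keyword_relevance", FactorType.DYNAMIC),
    RankingFactor("keyword_inclusions", FactorType.DYNAMIC),
    RankingFactor("user_location", FactorType.USER),
    RankingFactor("query_language", FactorType.USER),
]


def group_by_type(factors):
    """Return a dict mapping each FactorType to the factor names in it."""
    grouped = {factor_type: [] for factor_type in FactorType}
    for factor in factors:
        grouped[factor.factor_type].append(factor.name)
    return grouped


grouped = group_by_type(EXAMPLE_FACTORS)
for factor_type, names in grouped.items():
    print(factor_type.value, names)
```

Grouping notes this way makes it easier to see which signals depend on the page alone and which only exist in the context of a query or a user.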

Yandex’s leak learnings up to this point

A lot of data exists in the leak, and we are likely to come across new connections in the coming weeks. From the data examined so far, the learnings and affirmations we have been able to make include:

- Yandex still uses meta keywords, which are also mentioned in its documentation.

- Nothing new suggests that Yandex can crawl JavaScript beyond the processes that are already publicly documented.

- Much like Google’s Search Quality Rater Guidelines define strict rules for Your Money or Your Life (YMYL) content, the leak revealed that Yandex also has specific ranking factors for legal, medical, and financial topics.

- The time of day is taken into consideration for ranking.

- Server errors and excessive 4xx errors can have an impact on rankings.
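The last point is the easiest one to monitor. As a rough sketch, the share of 4xx and 5xx responses can be computed from the status codes of a site crawl or server log. The leak does not state a threshold for what counts as "excessive," so none is assumed here, and the function name and sample data are illustrative only.

```python
from collections import Counter


def error_shares(status_codes):
    """Return the share of 4xx and 5xx responses in a list of HTTP status codes."""
    total = len(status_codes)
    if total == 0:
        return {"4xx": 0.0, "5xx": 0.0}
    # Group codes by their first digit: 2 for 2xx, 4 for 4xx, and so on.
    classes = Counter(code // 100 for code in status_codes)
    return {"4xx": classes[4] / total, "5xx": classes[5] / total}


# Sample status codes, as they might come from a crawl export.
shares = error_shares([200, 200, 404, 500, 301, 200, 410, 200])
print(shares)
```

Tracking these shares over time shows whether broken pages or server problems are growing, whatever weight Yandex actually gives them.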

MatrixNet

MatrixNet is mentioned in a few of the ranking factors. It was announced in 2009 but was superseded in 2017 by CatBoost, which was rolled out across the Yandex product sphere. This further substantiates Yandex’s comment that the leak comes from an outdated code repository.

MatrixNet was introduced as a new core algorithm that took numerous ranking factors into consideration and assigned weights based on the user, the actual search query, and the perceived intent. It is often compared to an early version of Google’s RankBrain, although the two are in fact different systems. MatrixNet has since been built upon, which is not surprising considering that it is 14 years old.

In 2016, Yandex introduced the Palekh algorithm, which used deep neural networks to better match documents and queries, even when they shared few common keywords but the document still satisfied the user’s intent. Palekh was capable of processing 150 pages at a time.

URL and page-level factors

The leak shows that Yandex takes URL construction into consideration, specifically:

- The presence of numbers in the URL

- Similar to Google Search Central’s best practices for URL structure, if there are too many trailing slashes in the URL, they will be penalized or removed.

- The number of capital letters in the URL is a factor.
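The three URL signals listed above are simple to check on your own pages. The sketch below uses only Python's standard library. It inspects the URL path only, which is an assumption, since the leak descriptions do not say which part of the URL is examined, and the dictionary keys are illustrative names.

```python
import re
from urllib.parse import urlsplit


def url_features(url: str) -> dict:
    """Extract the three URL-level signals listed above from a URL's path."""
    path = urlsplit(url).path
    # Count only the slashes at the very end of the path.
    trailing = len(path) - len(path.rstrip("/"))
    return {
        "has_digits": bool(re.search(r"\d", path)),
        "trailing_slashes": trailing,
        "capital_letters": sum(1 for ch in path if ch.isupper()),
    }


features = url_features("https://example.com/Blog/Post-2023//")
print(features)
```

Running a check like this across a sitemap is a quick way to find URLs with mixed case or doubled trailing slashes before worrying about how heavily they are weighted.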

The document’s age and its last-updated date are also important, which makes sense. Apart from that, a number of data points relate to freshness, more so for news-related queries. Yandex previously used timestamps for reordering purposes, but this factor is now classified as “unused.”

Backlink importance

Yandex has algorithms to combat link manipulation that are very similar in aim to Google’s official spam policies on link spam, and it has been filtering manipulative links since the Nepot filter in 2005. From a review of the backlink factors and some of the specifics in their descriptions, the best practices for building links for Yandex SEO can be assumed to be the following:

- Build links at a natural frequency and in varying amounts.

- Build links with branded anchor texts as well as commercial keywords.

- If you are buying links, do not buy links from websites that have mixed topics.

The following factors confirm these best practices:

- The age of the backlink is an important factor.

- Topic-based link relevance

- Link relevance is based on the quality of each link.

- Link relevance that takes into account the quality of each link and the topic of each link

- The percentage of inbound links with quality keywords

But there are some link-related factors that are additional considerations when planning, monitoring, and interpreting backlinks.

- The ratio of good versus bad backlinks to a website

- The frequency of links to the site

- The number of incoming SEO trash links between the hosts
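Two of these considerations, the good-versus-bad ratio and link frequency, can be tracked from any backlink export. The following is a minimal sketch under stated assumptions: the `is_good` flag is a hypothetical label from your own link audit, the hosts and dates are made up, and nothing here reproduces how Yandex itself scores links.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Backlink:
    source_host: str
    first_seen: date
    is_good: bool  # hypothetical label from your own link audit


def good_bad_ratio(links):
    """Ratio of good to bad links; None when there are no bad links."""
    good = sum(1 for link in links if link.is_good)
    bad = len(links) - good
    return good / bad if bad else None


def links_per_month(links):
    """Count newly seen links per (year, month) to expose sudden bursts."""
    return Counter((link.first_seen.year, link.first_seen.month) for link in links)


# Made-up sample data for illustration.
links = [
    Backlink("a.example", date(2023, 1, 5), True),
    Backlink("b.example", date(2023, 1, 20), True),
    Backlink("c.example", date(2023, 2, 2), False),
    Backlink("d.example", date(2023, 2, 9), True),
]

print(good_bad_ratio(links))
print(links_per_month(links))
```

A month whose count is far above the others is worth a closer look, since a short, sharp burst of links is what a negative SEO attack tends to look like.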

The data leak also revealed that the spam link calculator has about 80 active factors, alongside a number of deprecated ones. This raises the question of how Yandex SEO works in practice, and in particular how Yandex determines how bad a link is. A negative SEO attack, on the other hand, is likely to be a short burst. Yandex uses machine learning models to identify private blog network (PBN) links and sponsored links, and it makes the same assumption about link velocity and the time frame within which links are acquired.

Paid-for links are typically generated over a longer period of time, and Yandex’s link spam measures were introduced to combat these patterns.

On-page advertising

A number of ranking factors relate to advertising on the page, and a few of them are deprecated. The descriptions do not make clear what the thought process behind them was, but a useful point of comparison is how Google objects when ads obscure the main content of a page.

With the Proxima update, Yandex’s mechanisms also took into consideration the ratio of useful content to advertising content on a page.

Is it possible to apply Yandex’s learnings to Google?

Google and Yandex are disparate search engines with a number of differences, despite the thousands of engineers who have worked for both companies. Because of this fight for talent, it is reasonable to infer that a few of these master builders and engineers have built things in a similar fashion at both.

The views of the Russian SEO pros when it comes to these leaks

Just like professionals worldwide, Russian SEO professionals have had their say on the leaks across numerous Runet forums. A point to consider is that a number of the conclusions and findings they have drawn from this data match those of Western SEOs.

For more such blogs, connect with GTECH.

FAQs: The Yandex Search Ranking Leak

Q1: What is the Yandex leak?

The Yandex leak occurred in January 2023 when a massive data breach exposed the internal source code of the Russian search engine. The leaked files revealed 1,922 individual ranking factors used by Yandex to evaluate and rank websites in its search results. If you’re looking for expert guidance on understanding such complex ranking signals, working with a reputable SEO company in Dubai can help you navigate these technical insights effectively.

Q2: Does Yandex still use meta keywords for ranking?

Yes. Surprisingly, the leaked documentation confirmed that Yandex still actively uses meta keywords as a ranking signal. This is a major difference from Google, which officially stopped using meta keywords for ranking purposes over a decade ago.

Q3: How does Yandex classify its search ranking factors?

Yandex classifies its ranking components into three major categories. These include static factors directly tied to the website (like internal links), dynamic factors relating the site to a search query (like keyword relevance), and user-search factors (like the searcher’s exact location).

Q4: Do server errors affect my Yandex rankings?

Yes, excessive server errors and 4xx status codes have a direct negative impact on your rankings. The leak confirmed that Yandex penalizes websites with frequent server downtime or broken pages, making robust technical site maintenance absolutely crucial.

Q5: What is the Yandex MatrixNet algorithm?

MatrixNet is a machine-learning algorithm introduced by Yandex in 2009. It was designed to assign different weights to ranking factors based on perceived user intent. Although the leak referenced it heavily, Yandex actually superseded MatrixNet with a newer algorithm called CatBoost in 2017.

Q6: Does URL structure matter for Yandex?

Yes, the leak revealed that Yandex pays close attention to specific URL-level factors. The search engine looks at the presence of numbers in a URL and the number of capital letters used, and it penalizes or removes excessive trailing slashes.

Q7: How important are backlinks for Yandex rankings?

Backlinks remain a highly important ranking factor for Yandex. The algorithm evaluates the age of the backlink, topic-based link relevance, and the overall ratio of good versus bad backlinks pointing to your website to determine your site’s authority.

Q8: Can buying cheap links hurt my Yandex rankings?

Absolutely. Yandex uses advanced machine learning models and spam link calculators to identify and devalue artificial link building. The algorithm specifically penalizes links bought from mixed-topic websites and unnatural link velocity patterns designed to manipulate search results.

Q9: Does Yandex penalize websites for having too many ads?

Yes. The leaked documents showed that Yandex analyzes the ratio of useful content compared to advertising content on a page. If on-page advertisements obscure the main content or ruin the user experience, the page’s ranking will be negatively impacted.

Q10: Can I use the Yandex leak to improve my Google SEO?

While Google and Yandex are completely separate search engines, they share many fundamental machine-learning principles. Analyzing the Yandex leak provides a rare, technical look into how modern search engines evaluate trust, links, and content. A professional SEO agency in Dubai can use these insights to build stronger, search-agnostic optimization strategies that work across multiple platforms.
